Why Your AI Tools Are Failing: The Knowledge Foundation Most Businesses Skip

Most businesses plug in AI tools and expect results. What actually works is building a context wiki first - the knowledge foundation that turns generic AI into an operator that knows your business inside out.


Most AI projects fail because businesses skip the knowledge layer. They get a Claude or ChatGPT subscription, ask it to automate outreach or write emails, and get generic output that any competitor could produce with the same prompt. Then they conclude AI doesn't work. The real problem is that no one built an AI knowledge base for the business - the foundation that separates useful AI from expensive autocomplete.

95% of AI Projects Fail. The Reason Is Simpler Than You Think.

The MIT NANDA Project found that 95% of generative AI pilots at companies fail to deliver measurable business impact. RAND Corporation puts the broader AI project failure rate at over 80% - which is twice the failure rate of non-AI technology projects. And Gartner predicts that 60% of AI projects unsupported by AI-ready data will be abandoned by the end of 2026.

95% of generative AI pilots fail to deliver measurable business impact (Source: MIT NANDA Project, 2025).

These are brutal numbers. The majority of businesses trying to use AI are getting burned. But when you look at why, the same pattern keeps showing up. It's not that the models are bad. Claude, GPT, Gemini - they're genuinely impressive technology. The issue is that businesses treat AI like a plug-and-play tool. Subscribe, point it at a task, expect results.

That's like hiring the smartest person you've ever met and giving them zero onboarding. I've written before about why recruitment automation fails - the tech is rarely the problem. The foundation is. No context about your clients. No knowledge of your processes. No access to your data. Just "figure it out." Of course they're going to underperform. And of course you're going to think the hire was a mistake.

What Happens When AI Operates Without Your Business Context

AI defaults to general knowledge when it doesn't have yours. And general knowledge is the definition of generic.

I see this constantly with recruitment agencies. They have years of knowledge trapped in people's heads. They know how specific clients like their shortlists formatted. Which hiring managers actually respond and why. What salary ranges actually close in niche roles. All the nuances in their industry that separate a successful placement from a wasted submission.

But none of it is documented. So when a recruiter leaves, that knowledge walks out the door with them. And when they try to deploy AI tools, those tools operate in a vacuum - producing the same output that any competitor could get by typing the same prompt into a free ChatGPT window.

70% of critical operational knowledge is tribal - never written down, never formally taught (Source: OEM Magazine). It exists in Slack threads, in people's instincts, in the way someone just "knows" which candidates will work for which clients. None of that is accessible to AI.

Fortune 500 companies lose an estimated $31.5 billion per year from this failure to capture and share knowledge (Source: Iterators / Fortune). For smaller businesses, the impact is proportionally worse - one key departure can set an agency back months.

I've lived this. When I was working in recruitment, we spent six months building out a contracting team. Hired specialists, developed the vertical, started generating revenue. But we never documented the processes properly and the team always felt like a stepchild compared to the perm desk. Eventually the entire unit left and became a competitor. Different model, different target customer - but it was a growing revenue stream gone overnight. And all the category expertise those people had built up walked out the door with them. No handover. No playbook. Just gone.

That experience is honestly one of the reasons I'm so obsessive about documenting everything now. Knowledge that only exists in people's heads is a liability, not an asset.

And this problem is everywhere. 68% of enterprise data remains completely unanalyzed. Not because the data doesn't exist, but because it's trapped in silos that neither humans nor AI can access.

When your AI has none of this context, you get exactly what you'd expect. Hallucinated answers. Generic suggestions. Output that sounds impressive but contains zero business intelligence. Then you conclude that AI doesn't work for your use case. It does work. You just didn't give it anything real to work with.

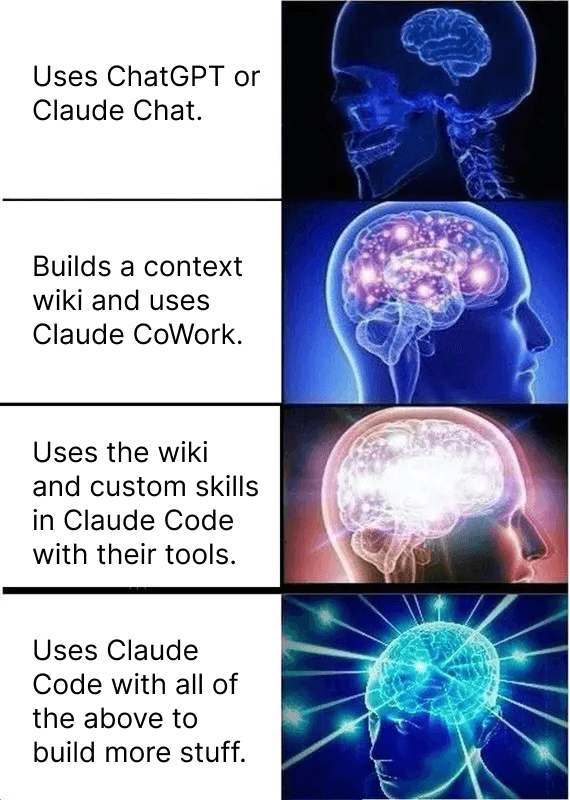

The Expanding Brain of AI Adoption

There's a meme that perfectly captures how businesses progress with AI adoption. I posted it on LinkedIn recently and it landed hard because everyone immediately recognized which level they were stuck at.

Level 1: You're using ChatGPT or Claude Chat. Typing questions into a chat window. Getting answers. Occasionally impressed, often disappointed. This is where the vast majority of businesses stop - and where they form the opinion that AI "doesn't really work" for their specific situation.

Level 2: You build a context wiki. You start documenting your business knowledge in a structured format and use tools like Claude that can reference it. Now the AI knows your ICP, your processes, your brand voice. The output quality jumps dramatically because it's no longer guessing - it's drawing from your actual playbook.

Level 3: You go further. Custom skills with your tools connected. The AI can pull from your CRM, your analytics, your email platform - not just stored knowledge but live data. It starts doing things for you, not just answering questions.

Level 4: Full AI Operating System. The wiki, the skills, the tool connections, all working together and building more on top of itself. New insights get compiled back into the wiki. New workflows get created as reusable skills. The system compounds.

Most people complaining about AI are stuck at Level 1 and judging the entire technology by its worst-case scenario. That's like test-driving a car in first gear and concluding it's too slow for the highway. We mapped out a full 5-level maturity model for recruitment automation, and the wiki is the unlock that separates Level 2 from Level 3+.

What Is a Context Wiki and Why Does It Change Everything?

A context wiki is a structured knowledge base that captures everything your AI needs to know about your business to operate at an expert level. It's not a Google Doc. It's not a Notion workspace full of meeting notes nobody reads. It's a compiled, cross-referenced, auto-growing system that serves as the foundation for every AI workflow you build.

Andrej Karpathy - formerly of OpenAI and Tesla - popularized a framework for this that resonated with thousands of practitioners. Three layers working together. Raw sources go in: call transcripts, SOPs, case studies, articles, whatever your unprocessed material looks like. An LLM compiles these into structured wiki articles with summaries, cross-references, and key takeaways. And a configuration layer tells the AI how to navigate and use all of it.
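To make the three layers concrete, here is a minimal Python sketch of the idea. It is not Karpathy's code or our pipeline: `stand_in_summarize` is a hypothetical placeholder that keeps the first sentence where a real system would call an LLM, and the file names, topics, and index format are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class WikiArticle:
    title: str
    summaries: list[str] = field(default_factory=list)
    sources: list[str] = field(default_factory=list)
    related: list[str] = field(default_factory=list)


def stand_in_summarize(text: str) -> str:
    # Hypothetical placeholder for the LLM compile step: keeps the first sentence.
    return text.split(".")[0].strip() + "."


def compile_wiki(raw_sources: dict[str, tuple[list[str], str]]) -> dict[str, WikiArticle]:
    """Layer 2: compile raw sources (name -> (topics, text)) into one article per topic."""
    wiki: dict[str, WikiArticle] = {}
    for name, (topics, text) in raw_sources.items():
        summary = stand_in_summarize(text)
        for topic in topics:
            article = wiki.setdefault(topic, WikiArticle(title=topic))
            article.summaries.append(summary)
            article.sources.append(name)
            # Cross-reference every other topic that the same source touches.
            for other in topics:
                if other != topic and other not in article.related:
                    article.related.append(other)
    return wiki


def build_index(wiki: dict[str, WikiArticle]) -> str:
    """Layer 3: a navigation index the AI is instructed to read before anything else."""
    lines = ["Read this index first, then open only the articles you need."]
    for title in sorted(wiki):
        related = ", ".join(wiki[title].related) or "none"
        lines.append(f"- {title} (sources: {len(wiki[title].sources)}; related: {related})")
    return "\n".join(lines)


# Layer 1: raw, unprocessed material (file names and contents are made up).
raw = {
    "call-0312.txt": (["icp", "objections"], "Founders care most about time-to-hire. Pricing came up twice."),
    "sop-outreach.md": (["outreach"], "Send the first follow-up after three days. Keep it short."),
}
wiki = compile_wiki(raw)
print(build_index(wiki))
```

A source that touches two topics feeds both articles and links them, which is the simplest possible version of "cross-referenced".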

Companies with mature data and knowledge practices see 3x higher ROI on AI initiatives (Source: Unframe AI / Industry Research).

Anthropic now calls this discipline "context engineering" - defined as curating and maintaining the optimal set of information during AI inference. It's a shift from prompt engineering (asking the right question) to context engineering (giving AI the right knowledge to draw from no matter what question you ask).

The practical difference is massive. When you ask general AI "what outreach approach should I use for healthcare recruiters?" you get a Wikipedia-level answer. When you ask AI wired into your context wiki the same question, it pulls your actual campaign data, references results from similar clients in that vertical, and suggests an approach based on what has actually worked. Not theory. Data.
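Under the hood, "wired into your context wiki" comes down to choosing which articles to put in front of the model before it answers. The sketch below assumes a wiki stored as a plain title-to-text dictionary and ranks articles by simple word overlap with the question. Real setups more often use embeddings or let the model navigate an index, so treat `select_context` and `build_prompt` as illustrative, hypothetical helpers.

```python
import re


def tokenize(text: str) -> set[str]:
    # Lowercased word set. Production systems usually rank by embeddings instead.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_context(question: str, wiki: dict[str, str], k: int = 2) -> list[str]:
    """Titles of up to k articles sharing the most words with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(f"{title} {body}")), title) for title, body in wiki.items()]
    # Drop articles with no overlap, then rank by score (ties broken alphabetically).
    ranked = sorted((pair for pair in scored if pair[0] > 0), key=lambda p: (-p[0], p[1]))
    return [title for _, title in ranked[:k]]


def build_prompt(question: str, wiki: dict[str, str], k: int = 2) -> str:
    """Prepend the selected wiki articles to the question."""
    sections = [f"## {t}\n{wiki[t]}" for t in select_context(question, wiki, k)]
    return "Use this business context:\n\n" + "\n\n".join(sections) + f"\n\nQuestion: {question}"


wiki = {
    "healthcare outreach results": "Healthcare recruiters replied best to short case-study emails.",
    "brand voice": "Plain language, no jargon, first person.",
    "construction hiring signals": "Project awards often precede hiring waves.",
}
question = "What outreach approach should I use for healthcare recruiters?"
print(build_prompt(question, wiki))
```

Only the healthcare article overlaps with the question, so it is the only context the model sees; the irrelevant articles stay out of the prompt.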

That gap is where most of the ROI lives. And most businesses haven't even started closing it.

How We Built Ours (And What It Actually Does)

Our context wiki - we call it ClaudeBrain - is the foundation of everything we do at Automindz. It currently holds 41 articles across 7 domains, roughly 145,000 words of compiled business intelligence. Case studies, client patterns, market research, sales playbooks, content frameworks, competitor analysis - all structured and cross-referenced so any AI workflow can pull the exact context it needs.

It grows automatically. Every new client engagement, call transcript, research session, and daily operations log feeds raw material into the system. A compilation pipeline reads these sources and updates wiki articles - adding new data points, flagging outdated information, connecting related insights across domains. The wiki from six months ago is a fraction of what it is today, and six months from now it'll be significantly richer. It compounds.
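One way to keep a pipeline like this incremental is to remember what has already been compiled. The sketch below assumes each raw source is a named text file and stores a SHA-256 hash per source in a manifest, so only new or edited sources get reprocessed. `compile_fn` is a hypothetical hook where an LLM would update the affected wiki articles; this illustrates the pattern, not how ClaudeBrain is actually implemented.

```python
import hashlib
from collections.abc import Callable


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def sources_to_compile(sources: dict[str, str], manifest: dict[str, str]) -> list[str]:
    """Names of raw sources that are new, or whose content changed since the last run."""
    return sorted(name for name, text in sources.items()
                  if manifest.get(name) != content_hash(text))


def run_compile(sources: dict[str, str], manifest: dict[str, str],
                compile_fn: Callable[[str, str], None]) -> list[str]:
    """Compile only what changed, recording each source's hash once it's processed."""
    changed = sources_to_compile(sources, manifest)
    for name in changed:
        compile_fn(name, sources[name])  # hypothetical: LLM updates affected articles
        manifest[name] = content_hash(sources[name])
    return changed


def noop(name: str, text: str) -> None:
    """Stand-in for the real compile step."""


manifest: dict[str, str] = {}
sources = {"call-01.txt": "Client prefers email.", "ops-log-0412.md": "Shipped proposal template."}

first = run_compile(sources, manifest, noop)   # both files are new
second = run_compile(sources, manifest, noop)  # nothing changed
sources["call-01.txt"] += " Budget frozen until Q3."
third = run_compile(sources, manifest, noop)   # only the edited transcript
print(first, second, third)
```

Hashing content rather than checking timestamps means a re-saved but unchanged file costs nothing, while any real edit triggers a recompile.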

But the wiki alone isn't the full picture. What makes it actually useful in daily operations is that it's wired into our live tools - our CRM, analytics platforms, email, calendar, databases - through connectors that give AI real-time access to business data. The wiki provides the context for how to interpret and act on that data. The tool connections provide the data itself. Together they turn a static knowledge base into a living operating system.

Here's what that combination looks like in practice. I can type "How's our web traffic?" and Claude generates a 2-page report that pulls live data from Google Search Console, Google Analytics, our database, our CRM, and Calendly - but it interprets all of that through the lens of our wiki. It knows our conversion benchmarks, our content strategy, which blog topics map to which ICP segments. So the output isn't just numbers - it's numbers with context, specific optimization suggestions, and blog topic ideas backed by keyword data and our actual content roadmap. That takes under two minutes.
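The "interpret through the lens of the wiki" step can be pictured as comparing live numbers against targets the wiki already stores. In this minimal sketch the metric names and values are hypothetical: the `live` dictionary stands in for data pulled from Search Console or Analytics, and `benchmarks` stands in for the conversion benchmarks kept in the wiki.

```python
def compare_to_benchmarks(live: dict[str, float], benchmarks: dict[str, float]) -> list[str]:
    """Interpret live rates against the targets stored in the wiki."""
    notes = []
    for metric, target in sorted(benchmarks.items()):
        if metric not in live:
            notes.append(f"{metric}: no live data")
        elif live[metric] < target:
            notes.append(f"{metric}: {live[metric]:.1%} is below the {target:.1%} benchmark")
        else:
            notes.append(f"{metric}: {live[metric]:.1%} meets the {target:.1%} benchmark")
    return notes


# Hypothetical numbers: benchmarks would come from the wiki, live rates from connectors.
benchmarks = {"organic_ctr": 0.03, "visit_to_booking": 0.01, "email_reply_rate": 0.05}
live = {"organic_ctr": 0.021, "visit_to_booking": 0.012}
for note in compare_to_benchmarks(live, benchmarks):
    print(note)
```

The raw dashboards would only show 2.1% and 1.2%; the benchmark from the wiki is what turns those numbers into "below target" or "on track".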

Or I can ask "Build me a list of Founders I haven't reached out to over the past 90 days" and it pulls every contact with no logged interaction in that timeframe from our CRM, runs a qualification check against ICP criteria defined in the wiki, and hands me a ranked list with suggested next actions and campaign ideas based on what has actually worked before. The CRM provides the raw data. The wiki tells it what "qualified" means for us and which outreach sequences convert.
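Here is a rough sketch of that split between CRM data and wiki criteria, assuming contacts arrive as simple records with a last-interaction date. The `Contact` fields, the `ICP` thresholds, and the scoring rule are all hypothetical; in practice the criteria would be read from the wiki rather than hard-coded.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Contact:
    name: str
    title: str
    company_size: int
    industry: str
    last_contacted: Optional[date]  # None means never contacted


# Hypothetical ICP criteria, the kind of definition the wiki would hold.
ICP = {"title_keyword": "founder", "min_size": 5, "max_size": 200,
       "industries": {"recruitment", "staffing"}}


def not_contacted_since(contacts: list[Contact], today: date, days: int = 90) -> list[Contact]:
    cutoff = today - timedelta(days=days)
    return [c for c in contacts if c.last_contacted is None or c.last_contacted < cutoff]


def icp_score(c: Contact, icp: dict) -> int:
    """How many of the three ICP criteria the contact meets (0-3)."""
    return sum([
        icp["title_keyword"] in c.title.lower(),
        icp["min_size"] <= c.company_size <= icp["max_size"],
        c.industry.lower() in icp["industries"],
    ])


def founder_shortlist(contacts: list[Contact], today: date, icp: dict) -> list[Contact]:
    """Founders with no interaction in 90 days, best ICP fit first."""
    pool = [c for c in not_contacted_since(contacts, today)
            if icp["title_keyword"] in c.title.lower()]
    return sorted(pool, key=lambda c: (-icp_score(c, icp), c.name))


contacts = [
    Contact("Ana", "Founder & CEO", 40, "Recruitment", date(2025, 1, 10)),
    Contact("Ben", "Co-Founder", 800, "Staffing", None),
    Contact("Cara", "Founder", 25, "Recruitment", date(2025, 6, 1)),
    Contact("Dev", "Head of Talent", 30, "Recruitment", None),
]
for c in founder_shortlist(contacts, date(2025, 6, 30), ICP):
    print(c.name, icp_score(c, ICP))
```

Cara drops out because she was contacted within the window and Dev because he isn't a founder; Ben stays on the list but ranks below Ana because his company is outside the size range.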

That's the key insight most people miss. The wiki and the tool connections aren't separate things - they're two halves of the same system. The wiki without live data is just documentation. Live data without the wiki is just dashboards. Put them together and the AI can actually operate your business instead of just answering questions about it.

Imagine running through hundreds or thousands of data points yourself to answer those questions. That used to take hours. Now it happens in minutes.

The Five-Layer AI Operating System

If you're serious about making AI work for your business, think of it as a five-layer operating system. Each layer builds on the one below it. Skip one and the layers above it break.

Layer 1: The Context Wiki (Knowledge Foundation)

This is where everything starts. Your ICP and qualification criteria. Your best SOPs and playbooks. Your brand voice and communication guidelines. Client-specific knowledge that currently lives in people's heads. Competitive intelligence. Industry-specific insights that no one outside your team has documented.

We always tell our clients: it's not your people, it's your system. And the system starts with capturing what your people know before it can do anything useful with that knowledge.

Think of this layer as the employee handbook for your AI. A brilliant new hire with access to your entire operational knowledge will outperform a genius with no context every single time. AI is no different.

Layer 2: Tool Connections (The Nervous System)

Your CRM, email platform, analytics, calendar, databases - connected so the AI can access real-time data, not just stored knowledge. This is what turns a smart assistant into an operational tool. It can check your pipeline, pull performance metrics, scan your inbox, and build reports using live data instead of asking you to copy-paste screenshots.
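In the Claude ecosystem this connection layer is typically built with MCP servers, but the sketch below doesn't use the MCP SDK. It's a minimal, hypothetical Python illustration of the shape of the idea: every tool exposes the same `fetch` interface, and a registry makes it obvious when the AI is asked about data it has no connection to. `InMemoryCRM` and its `field=value` query syntax are made up.

```python
from typing import Protocol


class Connector(Protocol):
    """Anything the AI can query for live data: CRM, analytics, calendar, inbox."""

    def fetch(self, query: str) -> list[dict]: ...


class InMemoryCRM:
    """Hypothetical stand-in for a real CRM connector."""

    def __init__(self, deals: list[dict]):
        self.deals = deals

    def fetch(self, query: str) -> list[dict]:
        # Toy query syntax: "field=value" returns records whose field equals value.
        key, _, value = query.partition("=")
        return [d for d in self.deals if str(d.get(key)) == value]


class ToolRegistry:
    """Maps tool names to connectors so a workflow can ask for data by name."""

    def __init__(self) -> None:
        self._tools: dict[str, Connector] = {}

    def connect(self, name: str, connector: Connector) -> None:
        self._tools[name] = connector

    def fetch(self, name: str, query: str) -> list[dict]:
        if name not in self._tools:
            raise LookupError(f"no connector for {name!r}: the AI cannot see this data")
        return self._tools[name].fetch(query)


registry = ToolRegistry()
registry.connect("crm", InMemoryCRM([
    {"deal": "Acme", "stage": "proposal"},
    {"deal": "Birch", "stage": "discovery"},
]))
print(registry.fetch("crm", "stage=proposal"))
```

Failing loudly on an unconnected tool is a deliberate choice here: it's better than the model quietly guessing at numbers it never saw.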

Layer 3: Skills and Instructions (The Playbook)

Reusable skill files that encode decision frameworks and multi-step processes. A morning briefing that pulls from five data sources. A content creation pipeline. A pre-call research brief. A pipeline health check. Each skill is a documented recipe - it knows what to do, which tools to use, what data to pull, and what the output should look like. They're built on top of the wiki, so every skill draws from the knowledge base and the output is always grounded in your specific context. For a practical breakdown of how Skills, CLAUDE.md, MCP, and Subagents work together, see our Claude Code beginner's guide.
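A skill file is essentially structured instructions. To show what "a documented recipe" contains, here is a minimal Python model of one. The `Skill` fields, the `render` method, and the example content are assumptions for illustration, not the Claude Code skill format.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    """A reusable recipe: what to load, which tools to call, what to produce."""
    name: str
    tools: list[str]
    wiki_articles: list[str]
    steps: list[str]
    output_format: str

    def missing_tools(self, connected: set[str]) -> list[str]:
        return [t for t in self.tools if t not in connected]

    def render(self, wiki: dict[str, str]) -> str:
        """Turn the recipe into instructions grounded in the named wiki articles."""
        context = "\n".join(f"[{a}] {wiki[a]}" for a in self.wiki_articles if a in wiki)
        steps = "\n".join(f"{i}. {s}" for i, s in enumerate(self.steps, start=1))
        return (f"Skill: {self.name}\nContext:\n{context}\n"
                f"Steps:\n{steps}\nOutput: {self.output_format}")


pre_call_brief = Skill(
    name="pre-call research brief",
    tools=["crm", "calendar"],
    wiki_articles=["icp", "case studies"],
    steps=["Find the next meeting in the calendar",
           "Pull CRM history for that prospect",
           "Match the prospect to the closest case study"],
    output_format="One page: context, talking points, suggested case study",
)
# Placeholder wiki content, made up for the example.
wiki = {"icp": "Recruitment agency founders, 5-200 staff.",
        "case studies": "Summaries of past client engagements."}
print(pre_call_brief.missing_tools({"crm"}))
print(pre_call_brief.render(wiki))
```

Because the recipe names its wiki articles explicitly, every run of the skill starts from the same grounded context instead of whatever the user remembers to paste in.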

Layer 4: Workflows (How Humans and AI Interact)

This is where the system meets actual work. Not every workflow looks the same - and it shouldn't. We run three types:

Automated workflows run on a schedule or trigger without anyone touching them. Data syncs, pipeline monitoring, content performance tracking. Set it up once, it runs in the background.

AI-augmented conversational workflows are the ones where a human asks the system to do something and reviews the output. Writing a blog post, generating a proposal, building a prospect list. The AI does the heavy lifting, the human steers and approves. Most of the daily work lives here.

Fully agentic workflows run autonomously with no human in the loop. We have one called idle-learn that continuously researches topics relevant to our business - AI trends, competitor moves, market shifts - compiles findings into structured summaries, and files them back into the wiki. It runs on its own and the knowledge base gets smarter without anyone manually feeding it. That's the compound effect in action.

The key insight is that not everything needs to be fully autonomous. Most of the value comes from the conversational layer - a human with a good system is faster than either one alone.
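The difference between the three workflow types mostly comes down to where, or whether, a human sits in the loop. This hypothetical sketch models that with a `Mode` enum: conversational runs need an approver, agentic runs file their output back into the wiki, and automated runs just return a result. It is not the idle-learn implementation.

```python
from collections.abc import Callable
from enum import Enum
from typing import Optional


class Mode(Enum):
    AUTOMATED = "automated"            # runs on a schedule or trigger
    CONVERSATIONAL = "conversational"  # human asks, AI drafts, human approves
    AGENTIC = "agentic"                # no human in the loop; files results itself


def run_workflow(mode: Mode, produce: Callable[[], str], wiki: list[str],
                 approve: Optional[Callable[[str], bool]] = None) -> Optional[str]:
    """Return the output that ships, or None if the human reviewer rejects it."""
    output = produce()
    if mode is Mode.CONVERSATIONAL:
        if approve is None:
            raise ValueError("conversational workflows need a human approver")
        return output if approve(output) else None
    if mode is Mode.AGENTIC:
        # Findings go straight back into the knowledge base.
        wiki.append(output)
    return output


wiki: list[str] = []
run_workflow(Mode.AUTOMATED, lambda: "pipeline sync done", wiki)
run_workflow(Mode.AGENTIC, lambda: "Summary: notes on this week's AI releases", wiki)
draft = run_workflow(Mode.CONVERSATIONAL, lambda: "Proposal v1", wiki,
                     approve=lambda text: "v1" not in text)  # reviewer asks for another pass
print(wiki, draft)
```

Only the agentic run grows the wiki on its own; the conversational run ships nothing until a human says yes.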

Layer 5: The Human Layer (Personal Context)

This is the top of the stack and the part most people overlook. Every person who uses the system has their own personal configuration file - a CLAUDE.md - that acts as their individual context layer on top of everything else.

My CLAUDE.md tells the AI who I am, what my responsibilities are, how I like to communicate, which tools I use most, and all the nuances specific to my role. Our Head of Sales has a completely different one - focused on pipeline, deal stages, call prep, and his outreach style. When he asks the system to draft a follow-up email, it writes like him, not like me. Same wiki, same tools, same skills - but personalized output because the human layer is different.

This is what makes the system scale across a team without everyone getting the same generic output. Each person's context file customizes the entire stack for their role, their preferences, and their way of working. New team member joins? Give them a CLAUDE.md, connect them to the shared wiki, and they're operating at full speed on day one instead of spending weeks figuring out how things work.
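Conceptually, the human layer is the last layer merged on top of the shared context, so personal settings win where they overlap. The sketch below assumes each layer can be flattened into key-value pairs, which is a big simplification of a real personal context file (free-form Markdown); all names and contents are made up.

```python
def compose_context(*layers: dict[str, str]) -> dict[str, str]:
    """Merge context layers in order; a later layer overrides earlier keys."""
    merged: dict[str, str] = {}
    for layer in layers:
        merged.update(layer)
    return merged


# Shared layer: what everyone gets from the wiki and skills (contents made up).
shared = {
    "icp": "Recruitment agency founders",
    "tone": "Plain and direct",
}
# Personal layers: stand-ins for each person's own context file (also made up).
ceo = {"role": "CEO: strategy and content"}
head_of_sales = {
    "role": "Head of Sales: pipeline, deal stages, call prep",
    "tone": "Plain and direct, short sentences, always ends with a next step",
}

for person in (ceo, head_of_sales):
    ctx = compose_context(shared, person)
    print(ctx["role"], "|", ctx["tone"])
```

Both people inherit the same ICP from the shared layer, while the sales lead's tone overrides the default only for his own requests.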

Here's the difference the full stack makes in practice:

| Scenario | Without the Stack | With the Full Stack |
|---|---|---|
| "Write an outreach email" | Generic template anyone could produce | Uses ICP match data, industry context, references your best-performing sequences, written in your voice |
| "Summarize my pipeline" | Asks what CRM you use | Pulls live deal data, flags stale opportunities, suggests specific follow-ups based on what's worked before |
| "Create a blog post" | SEO-optimized fluff with no substance | References real client results, uses your brand voice, cites internal data, follows your content strategy |
| "Research this prospect" | LinkedIn summary and company description | Cross-references CRM history, identifies mutual connections, suggests relevant case studies, preps talking points for your style |

The layers compound. The wiki makes the tools smarter. The tools make the skills more capable. The workflows determine how work actually gets done. And the human layer personalizes everything so each team member gets output that fits how they operate. It's a flywheel, not a one-time setup.

Where to Start (The First 5 Things to Document)

You don't need 145,000 words on day one. You need enough context for AI to stop guessing and start being genuinely useful. Here's where to begin:

1. Your ICP definition and qualification criteria. Who are you selling to? What makes a lead qualified? What disqualifies them? If you can't articulate this clearly in writing, your AI definitely can't figure it out on its own.

2. Your top 3-5 revenue-generating workflows. The processes that actually make money. For a recruitment agency, that's sourcing, outreach, screening, placement. For a SaaS company, it might be lead qualification, demo prep, onboarding. Document the steps, the tools involved, and the decisions made at each stage.

3. Your brand voice and communication guidelines. How do you talk to clients? What tone do you use? What words do you never say? Without this, AI writes in its default "corporate blog" voice - which is the same voice everyone else's AI also produces. That's why everything online is starting to sound the same.

4. Client-specific knowledge. The stuff trapped in people's heads right now. Which clients are price-sensitive. Which decision-makers prefer email over phone. What objections come up most frequently and how you handle them. This is the highest-value knowledge to capture because it's the most at-risk of being lost when someone leaves.

5. Your tool stack and data flows. Where does your data live? Which systems need to talk to each other? What information is currently being manually copy-pasted between platforms? AI can't use tools it doesn't know about and can't connect data sources it can't see.

Frame it this way: document what you'd tell a brilliant new hire in their first week. The things they'd need to know before they could do their job without asking you every five minutes. That's your minimum viable wiki. If you want a structured 30-day version of this, we published a full spreadsheets-to-systems playbook that walks through the transformation step by step.
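If you want a quick way to see where your minimum viable wiki is still thin, a small checker over the five starting sections is enough. This sketch assumes the wiki is a dictionary keyed by section; the keys and the 50-word threshold are arbitrary placeholders, not a recommended standard.

```python
# The five starting sections; the word threshold below is an arbitrary placeholder.
REQUIRED_SECTIONS = {
    "icp": "ICP definition and qualification criteria",
    "workflows": "Top 3-5 revenue-generating workflows",
    "brand_voice": "Brand voice and communication guidelines",
    "client_knowledge": "Client-specific knowledge",
    "tool_stack": "Tool stack and data flows",
}


def wiki_gaps(wiki: dict[str, str], min_words: int = 50) -> list[str]:
    """List required sections that are missing or thinner than min_words."""
    gaps = []
    for key, label in REQUIRED_SECTIONS.items():
        words = len(wiki.get(key, "").split())
        if words < min_words:
            gaps.append(f"{label}: {words} words (aim for {min_words}+)")
    return gaps


draft_wiki = {"icp": " ".join(["criterion"] * 60), "brand_voice": "Plain language."}
for gap in wiki_gaps(draft_wiki):
    print(gap)
```

Word count is a crude proxy for "enough context", but it's a cheap way to spot sections that exist in name only.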

The Results When You Get the Foundation Right

We're a 5-person team managing 35 active clients. That ratio only works because the system carries the operational load. Every team member has access to the same compiled knowledge, the same connected tools, the same reusable skills and workflows. The AI handles the repetitive, data-heavy work. The humans focus on relationships, strategy, delivery and the judgment calls that actually need a brain.

One solo construction recruiter we work with generated over $100K in placement fees within 30 days of going live. Not because the AI was smarter than other AI - it's the same Claude Code anyone can subscribe to. But because the system didn't start from zero. The wiki already had construction-specific hiring signals, outreach sequences proven in that vertical, and candidate engagement patterns from similar implementations. The context did the heavy lifting.

Goldman Sachs found that AI saves employees 40 to 60 minutes per day when properly implemented with context (Source: Goldman Sachs / OpenAI, 2026). And companies with mature knowledge practices see 3x higher ROI on AI initiatives compared to those who skip the foundation. The difference isn't the technology. It's the preparation.

What makes it really interesting is the compound effect over time. Month 1, the wiki has your basics. Month 6, it has patterns from dozens of campaigns, hundreds of client conversations, and thousands of data points. The AI gets meaningfully better not because the model upgraded but because it has more of your context to draw from. Every engagement adds knowledge. Every workflow execution generates data that makes the next one sharper.

So if you're starting fresh with AI in your business, the sequence matters more than the tools you pick:

- Build the context wiki first

- Connect your tools so AI can access live data

- Build skills and workflows on top of that foundation

Skip step one and you're just adding another tool to an already overcrowded stack. Get it right and you're building something that compounds every single day.

“Everyone can get a Claude subscription today and access the same level of intelligence. What makes all the difference is the knowledge and the connections you equip it with. You can't expect AI to do a proper job if it operates in a silo.”


Written by

Niklas Huetzen

CEO & Co-Founder

Niklas leads Automindz Solutions, helping recruitment agencies across the globe build AI-powered pipeline systems that deliver warm meetings on autopilot.
